For decades, software has been built around one assumption: a human has to operate it.

That assumption explains almost everything about modern software. Buttons. Menus. Forms. Dashboards. Search bars. Tabs. Notifications. Each of these exists to help a person translate intention into action.

But that assumption is starting to break.

AI agents are changing the shape of computing because they do not need software in the same way humans do. If an agent can understand a goal, plan a sequence of actions, use tools, call APIs, navigate systems, and coordinate with other agents, then the user interface stops being the center of software.

The next major shift in computing may not be better apps. It may be fewer of them.

That does not mean apps disappear overnight. It means their role changes.

The most important interface of the next software era may not be a screen full of controls. It may be an agent that acts on your intent.

Two ideas explain why this shift matters

Two recent lines of thinking help make sense of what is happening.

The first is the idea of the Agentic Web: a web where autonomous agents do not just retrieve information, but perceive, reason, coordinate, and act across long-horizon tasks on behalf of users. In that model, the web is no longer just something humans browse. It becomes an environment where software entities operate.

The second is the idea behind building the web for agents: today’s websites are built for human eyes and hands, not for machine actors. Agents can use them, but only awkwardly, by interpreting interfaces that were never designed for them in the first place.

Together, these ideas push the conversation beyond chatbots and prompt boxes. They point to something deeper: software organized around outcomes instead of navigation.

The interface was a workaround for human limitations

To understand why this matters, it helps to look at what apps actually are.

An app is not the software itself. It is a translation layer. It takes the complexity of business logic, permissions, workflows, databases, and integrations, and compresses all of that into something a human can click through.

That was a brilliant design for a world where humans had to manually drive every workflow.

You want to book a trip? You open tabs, compare flights, check dates, fill forms, confirm prices, enter passenger details, then repeat the process for hotels, transfers, and calendar updates.

You want a market analysis? You search multiple sources, open reports, compare notes, build a spreadsheet, create charts, write a summary, and send it to your team.

The modern web was built around this pattern: humans navigate, humans choose, humans execute.

Apps exist because humans need interfaces.

That sounds obvious until you flip it:

AI agents do not need the same interfaces humans do.

That is where the ground starts moving.

The real shift is not from clicking to chatting

A lot of people still frame the AI transition like this: instead of clicking buttons, we type prompts into a chat box.

That is too shallow.

The real shift is not from clicking to chatting. It is from operating software to delegating intent.

That is what makes agents fundamentally different from chatbots.

A chatbot answers a question. An agent carries out a task over time. It can hold context, break a goal into subtasks, call tools, recover from intermediate failures, and keep going until it reaches an outcome.
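To make that difference concrete, here is a minimal Python sketch of the loop an agent runs. The `plan` function, the tool names, and the retry policy are hypothetical placeholders (a real agent would usually plan with a language model and call real APIs). What the sketch shows is the structure the paragraph describes: context carried across steps, a goal broken into subtasks, a tool called for each one, and recovery from an intermediate failure.

```python
from typing import Callable

# A tool receives the shared context and returns a result (hypothetical signature).
Tool = Callable[[dict], str]


def plan(goal: str) -> list[str]:
    """Hypothetical planner that breaks a goal into ordered subtasks.

    A real agent would ask a model to decompose the goal; this stub
    ignores it and returns a fixed trip-booking plan for illustration.
    """
    return ["search_flights", "book_flight", "update_calendar"]


def run_agent(goal: str, tools: dict[str, Tool], max_retries: int = 2) -> dict:
    """Run each subtask through its tool, keeping context and retrying failures."""
    context: dict = {"goal": goal, "results": {}, "errors": []}
    for step in plan(goal):
        for attempt in range(max_retries + 1):
            try:
                # Each tool can read results from earlier steps via the context.
                context["results"][step] = tools[step](context)
                break
            except RuntimeError as err:
                # Record the failure and retry; give up after max_retries.
                context["errors"].append(f"{step}: {err}")
                if attempt == max_retries:
                    raise
    return context


# Usage: the booking tool fails once (say, a fare expired), then succeeds.
attempts = {"book_flight": 0}


def flaky_book(ctx: dict) -> str:
    attempts["book_flight"] += 1
    if attempts["book_flight"] == 1:
        raise RuntimeError("fare expired")
    return "booked " + ctx["results"]["search_flights"]


result = run_agent(
    "Book a trip to Berlin",
    {
        "search_flights": lambda ctx: "LIS-BER 09:40",
        "book_flight": flaky_book,
        "update_calendar": lambda ctx: "calendar updated",
    },
)
print(result["results"]["book_flight"])  # booked LIS-BER 09:40
print(result["errors"])                   # ['book_flight: fare expired']
```

The retry here is deliberately naive; a production agent might re-plan instead of repeating the same call. Even so, this loop already does something a chatbot answering one question at a time does not.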

That is a very different model of computing.

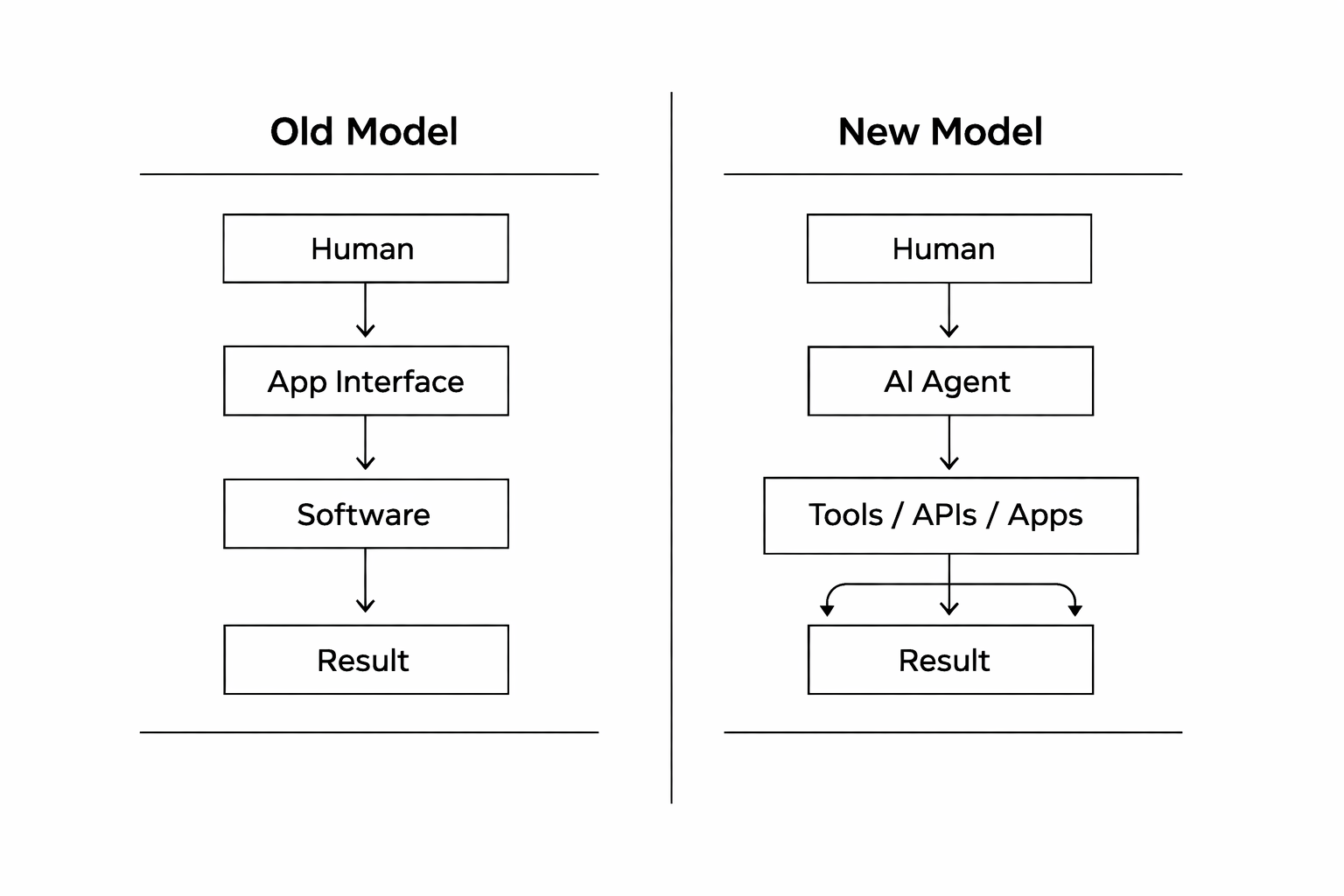

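In the old model, the human navigates, chooses, and executes. In the new model, the human delegates a goal and the agent carries out the steps.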

This is not a UX tweak. It is a new interaction paradigm.

The app is no longer the main destination. It becomes part of the execution layer.

The user no longer has to know which system to open first, which fields to fill, which dashboard to inspect, or which API to query. The user states a goal. The agent figures out the path.

That is a much bigger shift than replacing clicks with prompts.

From assistants to coworkers

This is also why the label “AI assistant” already feels too small.

The more useful framing is closer to coworker.

A tool waits for direct input. An assistant responds. A copilot suggests. A coworker takes ownership of part of the workflow. An autonomous operator executes bounded tasks with minimal supervision.

That progression matters because once software includes entities that can actually do things, the traditional UI becomes less important.

A finance agent does not care about a beautiful expense dashboard. It cares about permissions, policies, access to systems, and a reliable way to execute actions.

A research agent does not care about browsing twenty pages the way a person does. It cares about source access, context persistence, retrieval quality, and a reliable way to aggregate findings.

A coding agent does not get much value from polished menu hierarchies if it can already inspect a repository, run tools, create branches, test outputs, and submit controlled changes.
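Taking the finance agent as an example, "permissions and policies" can be as plain as an explicit rule check that runs before any action. The sketch is hypothetical: the `ExpensePolicy` fields, categories, and limits are invented for illustration, and a real deployment would load its rules from the organization's own policy system rather than hard-code them.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass
class ExpensePolicy:
    """Hypothetical expense rules an organization might give a finance agent."""
    allowed_categories: set[str]
    max_amount: Decimal              # Decimal avoids float rounding on money
    receipt_required_above: Decimal


def check_expense(policy: ExpensePolicy, category: str, amount: Decimal,
                  has_receipt: bool) -> tuple[bool, str]:
    """Return (allowed, reason) so the agent can either act or explain a refusal."""
    if category not in policy.allowed_categories:
        return False, f"category '{category}' not permitted"
    if amount > policy.max_amount:
        return False, f"amount {amount} exceeds limit {policy.max_amount}"
    if amount > policy.receipt_required_above and not has_receipt:
        return False, "receipt required"
    return True, "ok"


policy = ExpensePolicy({"travel", "meals"}, Decimal("500.00"), Decimal("50.00"))
print(check_expense(policy, "meals", Decimal("42.00"), has_receipt=False))
# (True, 'ok')
print(check_expense(policy, "travel", Decimal("120.00"), has_receipt=False))
# (False, 'receipt required')
print(check_expense(policy, "travel", Decimal("900.00"), has_receipt=True))
# (False, 'amount 900.00 exceeds limit 500.00')
```

Returning a reason alongside the decision lets the agent explain a refusal instead of failing silently, which matters once no dashboard is showing a person what went wrong.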

The screen still matters, but for different reasons. Review, oversight, trust, exception handling, and approval remain essential. What changes is that the screen is no longer the core execution environment.

Why today’s web is badly designed for agents

This is where the argument gets more technical, and more interesting.

The current web is fundamentally misaligned with agent behavior.

Today’s websites are designed primarily for human consumption. That means agents often have to scrape HTML, parse DOM structures heuristically, infer actions from layout, or even analyze screenshots just to understand what they are allowed to do.

That is brittle, inefficient, and often insecure.

The problem is not just that agents need better prompts.

The problem is that the web still speaks human UI.

If I design a travel site for a person, I expose calendars, dropdowns, fare cards, filters, seat selectors, and checkout flows. A person understands those through visual design and interaction patterns.

But an agent does not need visual hints. It needs explicit capabilities:

- what actions are available

- what state matters

- what permissions apply

- what data can be read

- what outcomes can be committed
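As a rough illustration, the five items above could be published as a machine-readable manifest that an agent reads instead of interpreting a page layout. This is a hypothetical Python schema (`Capability`, `Manifest`, and all field names are invented here); it is not the VOIX format or any existing standard.

```python
from dataclasses import dataclass, field


@dataclass
class Capability:
    """One action a site declares for agents (hypothetical schema)."""
    name: str                # what action is available
    inputs: dict[str, str]   # parameter name -> expected type
    required_scope: str      # what permission applies
    commits: bool            # True if calling it commits a final outcome


@dataclass
class Manifest:
    """A site's machine-readable description of itself (hypothetical schema)."""
    site: str
    readable_data: list[str]  # what data can be read
    state: dict[str, str]     # what state matters right now
    capabilities: list[Capability] = field(default_factory=list)

    def available_to(self, scopes: set[str]) -> list[str]:
        """Names of the actions an agent holding `scopes` may invoke."""
        return [c.name for c in self.capabilities if c.required_scope in scopes]


travel_site = Manifest(
    site="travel.example",  # placeholder domain
    readable_data=["fares", "seat_map"],
    state={"cart": "empty"},
    capabilities=[
        Capability("search_fares", {"origin": "str", "destination": "str", "date": "date"},
                   "read:fares", commits=False),
        Capability("hold_seat", {"fare_id": "str", "seat": "str"},
                   "write:booking", commits=False),
        Capability("purchase", {"fare_id": "str"}, "write:payment", commits=True),
    ],
)

# An agent granted read and booking scopes, but not payment:
print(travel_site.available_to({"read:fares", "write:booking"}))
# ['search_fares', 'hold_seat']
```

The agent never has to infer from a greyed-out button that it cannot pay: the missing scope is stated in data.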

This is why frameworks like VOIX matter. They point toward a world where websites expose machine-readable capabilities directly, instead of forcing agents to reverse-engineer screens designed for humans.

That is one of the clearest signals that the future of software is not just AI inside apps.

It is software built for agents.

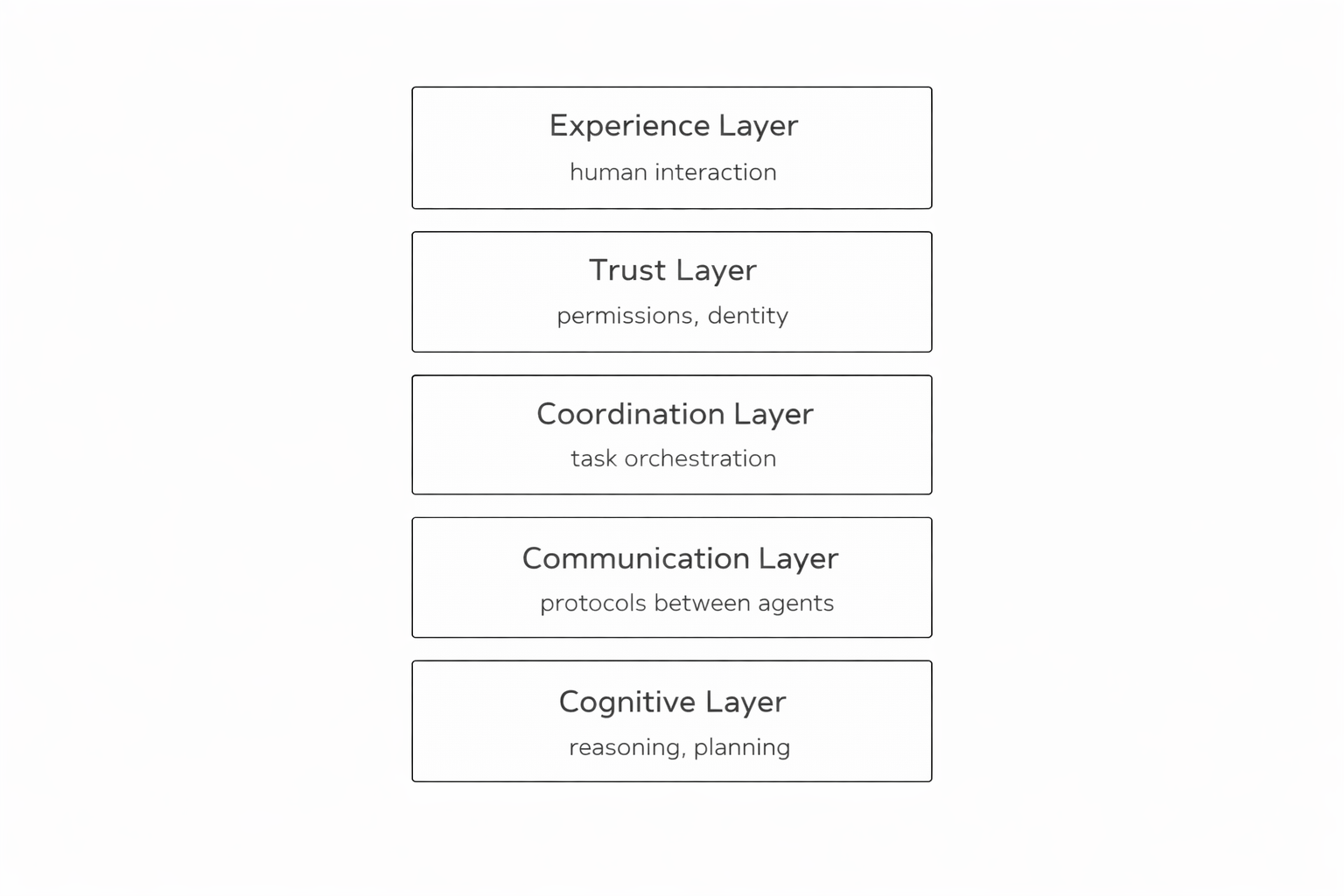

The rise of the agentic stack

Once you stop thinking of the interface as the product, a different architecture starts to appear.

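One useful way to think about it is as a layered stack: machine-readable execution at the base, with reasoning, coordination, trust, and the user experience built on top of it.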

This framing matters because it shows that agents are not just LLM wrappers with tool calls attached. They are part of a broader operating model.

Reasoning without coordination is not enough. Coordination without trust is dangerous. Trust without a usable experience will not be adopted. And experience without machine-readable execution underneath is still just another interface.

That is the deeper meaning of “the end of apps.” Not that all screens vanish, but that the interface becomes one layer among several rather than the whole product.

One agent will not replace apps. Teams of agents will

Another misconception is that the future looks like one super-agent doing everything.

That is probably not how this evolves.

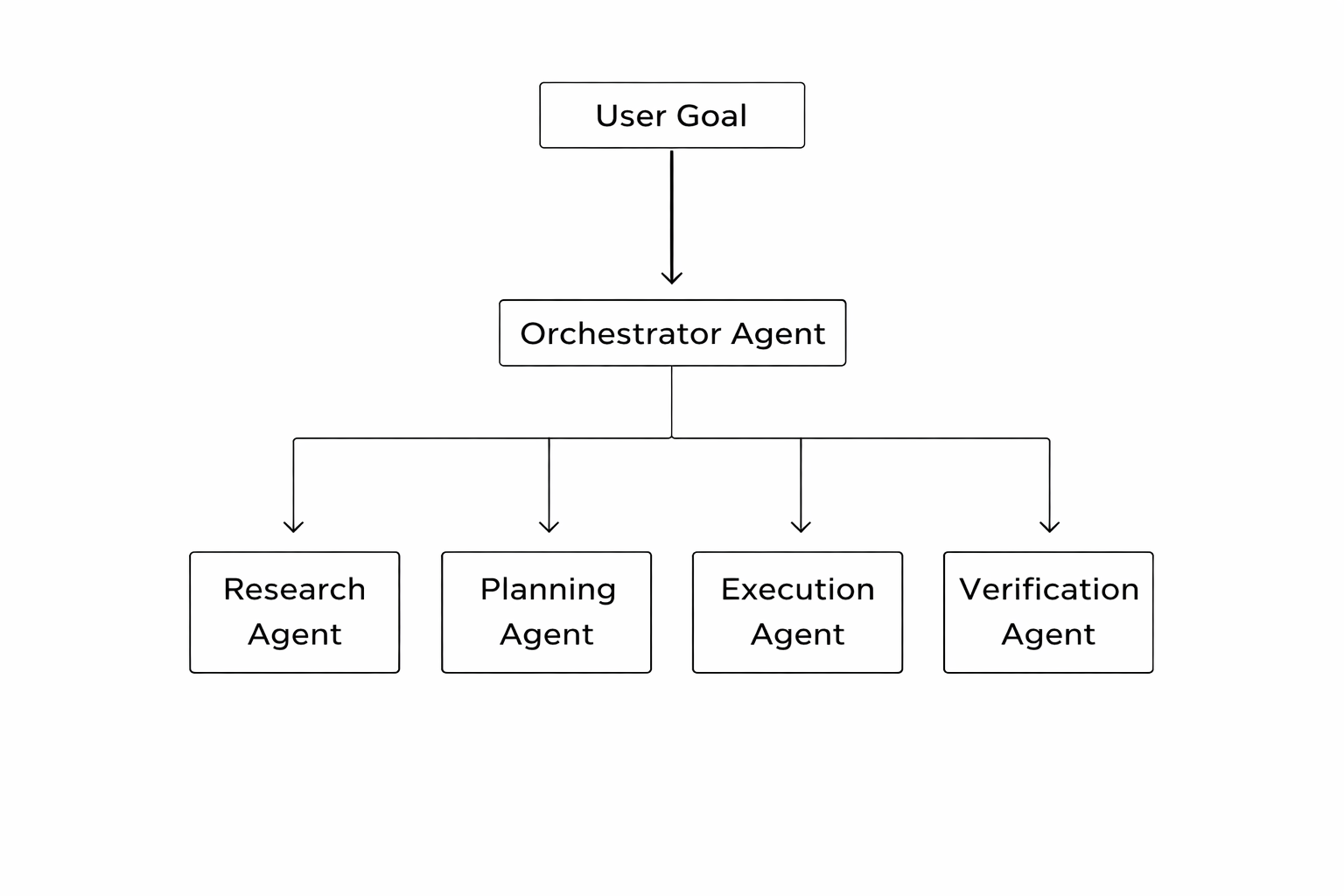

The more credible model is a team of specialized agents working together.

This is much closer to how real organizations operate.

A coordinator agent can assign research to one specialist, planning to another, execution to another, and validation to a separate control layer. The value is not magic. The value is orchestration.
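The hand-off pattern described above can be sketched as a coordinator that looks up specialists by capability and sends each result through a separate validation step before passing it on. Everything named here (`REGISTRY`, the three stages, the `validate` rule) is a hypothetical placeholder, and the specialists are plain functions standing in for model-backed agents.

```python
from typing import Callable

# A specialist takes the current task description and returns its output.
Specialist = Callable[[str], str]

# Hypothetical registry mapping a capability to the agent that provides it.
# Plain functions stand in for model-backed specialist agents.
REGISTRY: dict[str, Specialist] = {
    "research": lambda task: f"findings on {task}",
    "planning": lambda task: f"plan from {task}",
    "execution": lambda task: f"executed {task}",
}


def validate(output: str) -> bool:
    """Separate control layer; this stand-in only rejects empty output."""
    return bool(output.strip())


def coordinate(goal: str, stages: list[str]) -> list[str]:
    """Route work through specialists in order, validating every hand-off."""
    trail: list[str] = []
    current = goal
    for stage in stages:
        specialist = REGISTRY.get(stage)
        if specialist is None:
            raise LookupError(f"no agent registered for {stage!r}")
        output = specialist(current)
        if not validate(output):
            raise ValueError(f"validation failed at {stage!r}")
        trail.append(f"{stage}: {output}")
        current = output  # the next specialist builds on this result
    return trail


for line in coordinate("EU e-bike market", ["research", "planning", "execution"]):
    print(line)
```

Discovery (the registry lookup) and control (the validator) live outside any single agent, which is why they, rather than a screen, become the central objects.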

That matters because once workflows are distributed across specialized agents, the interface matters less as the central object. What matters more is discoverability, coordination, identity, policy, and trust.

Software starts to feel less like navigation and more like delegation.

Real-world software is already bending in this direction

This shift is not purely theoretical.

You can already see the pattern in enterprise workflows: invoice ingestion agents, OCR agents, compliance agents, approval workflow agents, integration agents, coding agents, support agents, research agents. None of these replace entire organizations. But they are starting to absorb bounded units of work that previously required people to manually drive software through front ends.

Even in software development, one of the most discussed AI use cases right now, the strongest pattern is not total autonomy. It is controlled delegation.

Developers are not handing over entire products without oversight. They are using agents to accelerate execution, inspect codebases, run tests, propose changes, monitor pipelines, and handle constrained tasks under supervision.

That is an important reality check.

The future is agentic, but it is not careless.

What will not disappear

This is where the argument needs to stay grounded.

The future is not a world with no interfaces.

Interfaces will still matter when risk is high, when approvals are required, when ambiguity is high, when users want to explore rather than delegate, and when the experience itself is part of the value.

A surgeon will not delegate critical judgment to an opaque agent.

A CFO will still want review points before major commitments.

A traveler may still want to compare options manually, not because it is efficient, but because the experience of choosing matters.

That is fine.

The point is not that humans leave the loop. It is that many workflows stop being interface-first.

The interface does not disappear. It becomes a checkpoint, a review layer, a trust surface, and sometimes a fallback.
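One way to read "the interface becomes a checkpoint" in code: the agent runs low-risk actions directly and parks anything at or above a risk threshold in a queue that a person must approve. The risk scores, the 0.5 threshold, and the `ReviewQueue` class are assumptions made for this sketch, not a description of any particular product.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Action:
    description: str
    risk: float               # hypothetical score in [0, 1], assigned upstream
    run: Callable[[], str]    # performs the action and returns a status


@dataclass
class ReviewQueue:
    """Stand-in for the human-facing checkpoint where risky actions wait."""
    pending: list[Action] = field(default_factory=list)

    def approve_next(self) -> str:
        """Called when a person approves the oldest pending action; only then does it run."""
        return self.pending.pop(0).run()


def dispatch(action: Action, review: ReviewQueue, threshold: float = 0.5) -> str:
    """Run low-risk actions immediately; hold anything at or above the threshold."""
    if action.risk < threshold:
        return action.run()
    review.pending.append(action)
    return f"awaiting approval: {action.description}"


review = ReviewQueue()
print(dispatch(Action("draft status email", 0.1, lambda: "draft saved"), review))
print(dispatch(Action("pay supplier invoice", 0.9, lambda: "payment sent"), review))
print(review.approve_next())  # the payment runs only after the human checkpoint
```

Where the threshold sits is a policy choice rather than a technical one; the CFO's review points above would simply be actions scored high enough to land in the queue.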

The end of apps is really the end of app-first computing

So what does “the end of apps” actually mean?

It means apps stop being the primary unit of interaction.

Today, software is organized around destinations: open this app, then that one, then another.

Tomorrow, more software will be organized around outcomes: state the goal, let agents coordinate systems, then review or approve the result.

That shift changes product design.

It changes web standards.

It changes enterprise architecture.

It changes how we think about UX.

And it changes what software even is.

For forty years, the interface was the place where value happened.

Now value is moving downward, into orchestration, protocols, reasoning loops, trust systems, and machine-readable actions.

The center of gravity moves away from the screen.

For decades, software was designed around what humans could click.

The next generation of software will be designed around what agents can do.

And once that shift happens, the interface is no longer the product.

It is the surface.

References

Cogent Labs. (2025). The Agentic Web: A Network of Autonomous AI Agents on the Rise.

CIO. (2026). Taming AI Agents: The Autonomous Workforce of 2026.

Mysore, V. (2026). Beyond the Chatbot: The Rise of the AI Agentic Web.

Microsoft Research. (2025). Toward an Agentic-Infused Software Ecosystem.

VOIX Research. (2025). Building the Web for Agents.

Wang, J., et al. (2025). Agentic Web: Weaving the Next Web with AI Agents.

Zhang, L. (2025). AI Agents Are Your New Coworkers.

Singh, R. (2025). AI Agent Use for Coding in 2025.